Wanice Alfes

Regional AI designs to reduce operational risk in high-regulation environments.

TO ADVANCE AI, WE MUST ENGINEER THE RIGHT PATHS.

ViSP-Lab

Background

ViSP-Lab's concept originated in 2022 at FHWein (Vienna) with Wanice Alfes's thesis on Doctor-to-Doctor Communication in The e-Health Era. The methodologies were later advanced at Harvard with the papers The Global Burden of Mental Illnesses and E-Governmentality and Influencers as Charismatic Authorities, which received faculty endorsement. ViSP-Lab R&D crystallized, however, in her latest paper, Exploring AI-Human Trust and Ethical Interaction Design - A Multidisciplinary Perspective, at MIT CSAIL.

Trust acts like gravity in complex systems. It is predictive, non-linear, and strategic. We can engineer trust to build stable relationships, shape perception, and support acceptance. When trust is absent, systems demand constant justification, and explainability is enhanced as a compensatory, defensive mechanism to manage uncertainty. We shape trust to match regional meanings and expectations. This includes cultural, semantic, and behavioural contexts, creating real operational trust.

Ethical AI models face high cross-cultural barriers

BECAUSE TRUST IS NOT ABSTRACT.

We can model and optimize trust dynamics to reduce relational friction across regional enterprise contexts.

The Law of Gravity for Trust

Explainability alone does not solve cross-regional misalignments.

We must engineer trust.

This is the essential value gap addressed at ViSP-Lab.

The Law of Gravity for Trust

It is an experiment to push the frontiers of trust and other concepts of ethical rhetoric. It reduces distance, amplifies resonance, and stabilizes cooperation.

The goal: de-risking systemic fragmentation through terms, meaning, and individual perception when interacting with AI solutions.

Variables in the Trust Equation

Fc ⟶ Force of Trust

Ms ⟶ Multidisciplinary Symbolic Capital

Mr ⟶ Resonance of Meanings

d ⟶ Relational Distance

Trust is still treated as an intangible variable. Most designs overlook the fundamental parameters that govern its acceleration or collapse. As relational distance increases, trust forces diminish. We build trust based on regional standards using Cognitive-Engineering models from the METAP4-Method and the AI Regional-Centric System.
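The source names the variables but does not state the equation itself. As a minimal sketch only, assuming the trust equation mirrors Newton's inverse-square law of gravitation, the relationship could be written as Fc = k * Ms * Mr / d². The inverse-square form, the constant `k`, and the function name `trust_force` are illustrative assumptions, not ViSP-Lab's published model.

```python
def trust_force(ms: float, mr: float, d: float, k: float = 1.0) -> float:
    """Hypothetical gravity-style trust force: Fc = k * Ms * Mr / d**2.

    ms: Multidisciplinary Symbolic Capital (Ms)
    mr: Resonance of Meanings (Mr)
    d:  Relational Distance (d), must be positive
    k:  assumed scaling constant (not defined in the source)
    """
    if d <= 0:
        raise ValueError("relational distance d must be positive")
    return k * ms * mr / d ** 2


if __name__ == "__main__":
    # Holding Ms and Mr fixed, doubling the distance quarters the force.
    for d in (1.0, 2.0, 4.0):
        print(f"d={d}: Fc={trust_force(ms=2.0, mr=3.0, d=d)}")
```

Under this assumed form, the sketch reproduces the qualitative claim above: with Ms and Mr held constant, the trust force falls as relational distance grows.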

ViSP-Lab analytical domains:

- REGIONAL AI MODELS FOR ENHANCED SYSTEM STABILITY
- COGNITIVE RELIABILITY
- TRUST INFRASTRUCTURE

We support industries in cross-regional deployment and high-regulation environments.

ViSP-Lab - The 4 Core Pillars

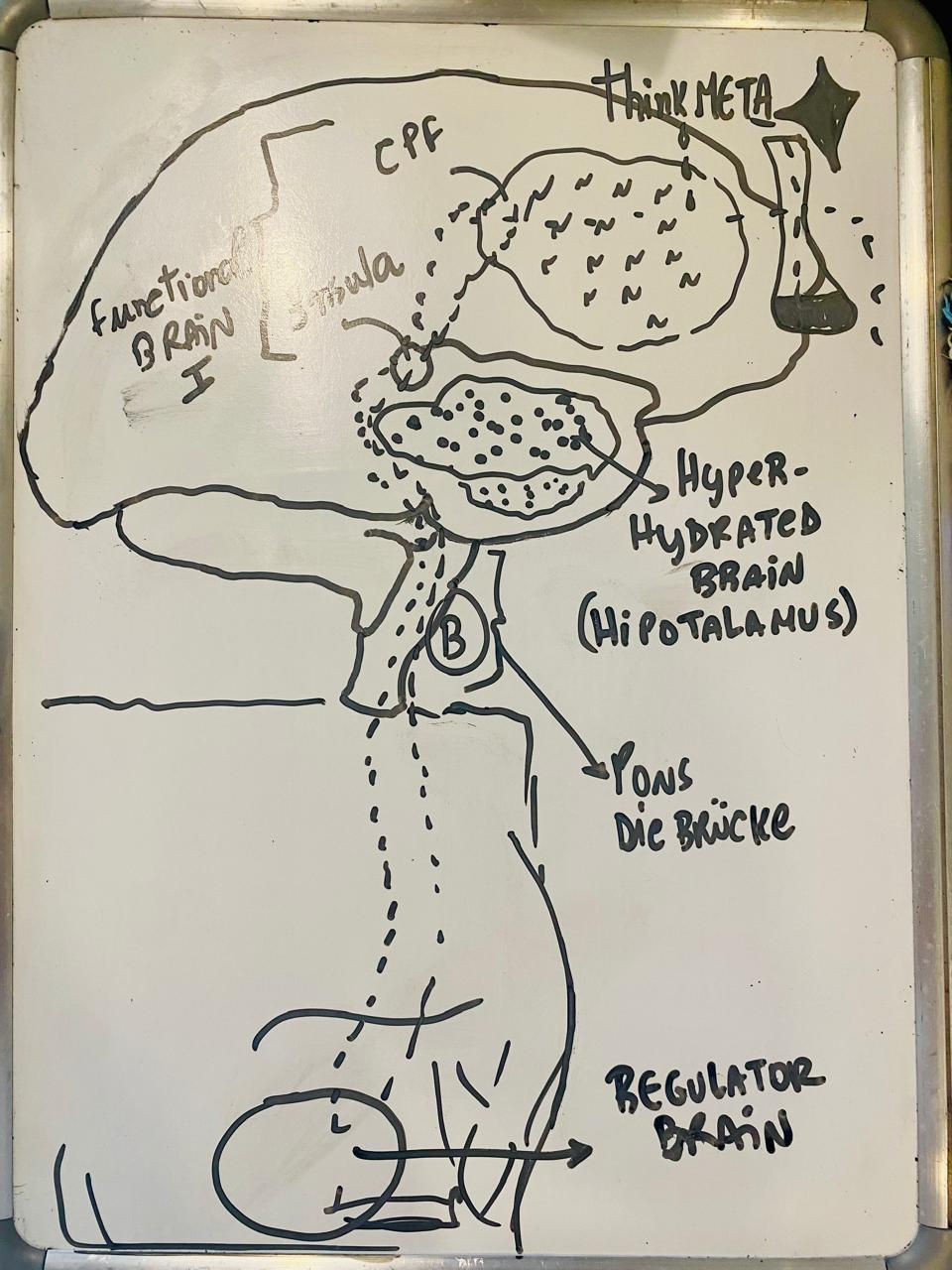

1 – The METAP4-Method

Applied framework for ethical human-AI models based on the 4Ps of medicine (ResearchGate, 2025).

2 – AI Regional-Centric System

From the globalization paradigm to regionalization (Université Grenoble Alpes, 2023).

3 – Cooperative Eusystem

Organizational model based on the principles of Vespidae cooperation (Cambridge, 2017).

4 – Applied Diversity

Action-oriented value creation beyond identity-related categories (Hellerstedt, Uman & Wennberg, 2024, Fooled by Diversity?).

DIE BRÜCKE - THE BRIDGE

Trust is no longer a discipline. It is now an urgent framework for regional alignments. One foundational AI design system, but different rules and meanings.

Interdisciplinarity is not an option anymore. It is a mandatory attribute of the AI revolution. ViSP-Lab bridges theory with applied models. We call this project Die Brücke.

Die Brücke bridges:

- German precision.
- Swiss innovation.
- Brazilian scalability ecosystems.
- U.S. knowledge transfer.

for ethical pilot programs in Regional Human–AI models designed for safety, replicability, and operation under EU-GDPR standards.

ViSP-Lab R&D

Interdisciplinary Lab-Framework for Cognitive Engineering

Timeline:

2023-2024 (Conceptual)

2025 (Deployment)

2026 (Bad Homburg Installation)

ViSP-Lab works under a Cooperative Eusystem of computational scientists, cyberpsychologists, social physicists, neuroscientists, bio-engineers, cyber-communication strategists, health experts, thinkers, and creatives. Join ViSP-Lab to advance Human-AI interaction: alfes@visp-lab.com

The hidden risk

It is no longer about technological capability.

It is the misalignment between AI models' speed, governance, and cognitive load under bias and dissonant meanings.

Systems are accelerating faster than human trust architectures can adapt.

THIS IS THE TERRAIN ViSP-Lab IS BEING BUILT FOR.

TIME RUNS OUT

Wanice Alfes welcomes:

- paper exchange
- conceptual workshops
- closed-room discussions
- pre-institutional collaborations

with AI hubs, research groups, and senior scientists working on regional Human–AI systems, trust, and governance.